As part of a project, testing must be planned to ensure that it delivers the expected results, and it must be completed within a reasonable time and budget. But test planning presents a special challenge. The aim of test planning is to decide where the bugs in a product or system will be and then to design tests to find them. The paradox, of course, is that if we knew where the bugs were, we could fix them without having to test for them. Testing is the art of uncovering the unknown, and it can therefore be difficult to plan.

The usual naive answer is that you should simply test "all" of the product. However, even the simplest program will defy every effort to achieve 100% coverage (see appendix). Even the term "coverage" is misleading, because it can mean many things. Do you mean code coverage, branch coverage, or input/output coverage? Each one is different, and each has different implications for development and testing. The ultimate truth is that complete coverage of any kind is simply not possible (nor desirable).

So how do you plan your testing? At the start of testing there will be a (relatively) large number of problems, and these can be uncovered with little effort. As testing progresses, more and more effort is needed to uncover each subsequent problem. The law of diminishing returns applies: at some point the investment needed to find that last 1% of problems is outweighed by the high cost of finding them, and letting the customer or client find them actually costs less than finding them in testing. The purpose of test planning is therefore to put together a plan that delivers the right tests, in the right order, to discover as many of the problems with the software as time and budget allow.
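The diminishing-returns argument can be made concrete with a simple cost model. The sketch below is an illustration only. It assumes that bugs are found along an exponential curve (a common simplification in reliability modeling) and that every bug reaching the customer costs a fixed amount. All figures and both function names are hypothetical. Under these assumptions, testing should stop roughly when the cost of finding the next bug exceeds the cost of letting the customer find it.

```python
import math


def stop_testing_hour(total_bugs: float, discovery_rate: float,
                      cost_per_hour: float, field_cost_per_bug: float) -> float:
    """Hour at which finding one more bug in testing costs as much as
    letting the customer find it.

    ASSUMPTION: bugs are found along an exponential curve, so after t hours
    total_bugs * (1 - exp(-discovery_rate * t)) bugs have been found and the
    find rate is total_bugs * discovery_rate * exp(-discovery_rate * t) per hour.
    """
    initial_find_rate = total_bugs * discovery_rate  # bugs per hour at t = 0
    # Testing cost per bug at hour t is cost_per_hour / find_rate(t).
    # Setting that equal to field_cost_per_bug and solving for t gives:
    ratio = initial_find_rate * field_cost_per_bug / cost_per_hour
    if ratio <= 1:
        return 0.0  # even the easiest bugs cost more to find than to let through
    return math.log(ratio) / discovery_rate


def bugs_found_by(hour: float, total_bugs: float, discovery_rate: float) -> float:
    """Expected number of bugs found after `hour` hours under the same model."""
    return total_bugs * (1 - math.exp(-discovery_rate * hour))


# Hypothetical figures: 200 latent bugs, 1% of the remainder found per hour,
# 50 per hour of testing, 500 per bug that reaches the customer.
t_stop = stop_testing_hour(200, 0.01, 50, 500)
print(round(t_stop), round(bugs_found_by(t_stop, 200, 0.01)))  # 300 190
```

With these made-up numbers, testing stops after about 300 hours, having found about 190 of the 200 bugs. Under the model, the last 10 would cost more to find in testing than to let the customer find them.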

Risk Based Testing:

Risk is based on two factors: the likelihood that a problem will occur and the impact of the problem when it does occur. For example, a complex piece of code will introduce far more errors than a simple module. Or a particular module could be critical to the success of the overall project: without it working perfectly, the product simply will not deliver its intended results. Both kinds of area should receive more attention and more testing than less "risky" areas. But how do you identify the areas of risk?
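Because risk combines likelihood and impact, one common way to rank areas is to score each factor on a small scale and multiply the two scores. This is a minimal sketch. It assumes 1 to 5 scales and a product score. The scales, the `Area` class and the area names are illustrative assumptions, not part of the original method.

```python
from dataclasses import dataclass


@dataclass
class Area:
    """One area of the product. The 1-5 scales are an illustrative convention."""
    name: str
    likelihood: int  # 1 = unlikely to fail ... 5 = very likely (e.g. complex code)
    impact: int      # 1 = cosmetic ... 5 = product cannot deliver its results

    @property
    def risk(self) -> int:
        # Risk as the product of the two factors named in the text.
        return self.likelihood * self.impact


def rank_by_risk(areas: list[Area]) -> list[Area]:
    """Riskiest areas first; equal scores keep their original order."""
    return sorted(areas, key=lambda a: a.risk, reverse=True)


areas = [
    Area("report formatting", likelihood=2, impact=2),
    Area("payment calculation", likelihood=3, impact=5),
    Area("expression parser", likelihood=5, impact=3),
    Area("login screen", likelihood=2, impact=4),
]
for area in rank_by_risk(areas):
    print(f"{area.risk:>2}  {area.name}")
```

A critical but simple module ("payment calculation") and a complex but less critical one ("expression parser") can end up with the same score. This matches the point that both kinds of area deserve extra testing.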

Software in Many Dimensions:

It is useful to think of software as a multi-dimensional entity with many different axes. For example, one axis is the program's code, broken down into modules and units. Another axis is the input data and all of its possible combinations. A third axis could be the hardware the system can run on, or the other software it interfaces with. Testing can then be seen as an attempt to achieve "coverage" of as many of these axes as possible. Remember that we are not seeking the impossible 100% coverage, merely the "best" coverage: verifying the function of all the areas of risk.

Outlining:

To start the test planning process, a simple process of "outlining" can be used:

1. List all of the "axes" or areas of the software on a piece of paper (a list of possible areas is given below, but there are certainly more than these).

2. Take each axis and break it down into its component elements.

For example, with the axis of "code complexity" you would break the program down into the "physical" source components that make it up. The axis of "hardware environment" (or platform) would be broken down into all the hardware and software combinations that the product is expected to run on.

3. Repeat the process until you are sure you have covered as much of each axis as possible (you can always add more later).

This is a process of deconstructing the software into its component parts based on different taxonomies. For each axis, simply list all of the possible combinations you can think of. Your testing will try to cover as many elements as possible on as many axes as possible. The most common starting point for test planning is a functional decomposition based on the technical specification. This is an excellent starting point, but it should not be the only axis that is addressed; otherwise testing will be limited to "verification" with no "validation".

| Axis / Category | Explanation |
|---|---|
| Functionality | As derived from the technical specification |
| Code structure | The organization and breakdown of the source or object code |
| User interface elements | User interface controls and elements of the program |
| Internal interfaces | Interfaces between modules of code (traditionally high-risk) |
| External interfaces | Interfaces between this program and other programs |
| Input space | All possible inputs |
| Output space | All possible outputs |
| Physical components | Physical organization of the software (media, manuals, etc.) |
| Data | Data storage and data elements |
| Platform and environment | Operating system, hardware platform |
| Configuration elements | Any modifiable configuration elements and their values |
| Use case scenarios | Each use case scenario should be considered as an element |
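An outline is essentially a mapping from each axis to its list of elements. The sketch below is a minimal illustration. It assumes a hypothetical order-entry application and uses a handful of the axes listed above, and every element name is made up. Flattening the outline gives the (axis, element) points that test cases must exercise. A simple check flags an outline that relies on the functional axis alone, or that has axes not yet broken down into elements.

```python
# Hypothetical outline for an imaginary order-entry application.
OUTLINE: dict[str, list[str]] = {
    "functionality": ["create order", "cancel order", "print invoice"],
    "input space": ["empty input", "typical input", "maximum-length input"],
    "platform": ["Windows 10", "Ubuntu 22.04"],
    "configuration elements": ["default settings", "custom currency"],
}


def outline_points(outline: dict[str, list[str]]) -> list[tuple[str, str]]:
    """Flatten the outline into the (axis, element) points tests must cover."""
    return [(axis, element) for axis, elements in outline.items() for element in elements]


def outline_warnings(outline: dict[str, list[str]]) -> list[str]:
    """Flag a functional-only outline and axes with no elements listed yet."""
    warnings = []
    if set(outline) == {"functionality"}:
        warnings.append("only the functional axis: verification without validation")
    for axis, elements in outline.items():
        if not elements:
            warnings.append(f"axis '{axis}' not yet broken down into elements")
    return warnings


print(len(outline_points(OUTLINE)))  # 10
print(outline_warnings({"functionality": ["create order"]}))
```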

Test Case Identification:

The next step is to identify test cases that "exercise" each of the elements in your outline. This is not a one-to-one relationship. Many tests may be needed to validate a single element of your outline, and a single test may validate more than one point on an axis. For example, a single test could simultaneously validate functionality, code structure, a user interface element and error handling. In the diagram shown here, two tests have been highlighted in red: "A" and "B".

Each represents a single test that has verified the behavior of the software on two particular axes: "input data space" and "hardware environment". For each point on each axis of your outline, choose a test that will exercise that functionality. Note whether that test also validates a different point on a different axis, and continue until you are satisfied that all points on all axes are covered. For now, do not worry too much about the details of how you might test each point; simply decide "what" is to be tested. At the end of this exercise you should have a list of test cases that represents near-perfect, almost 100% coverage of the product.
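The walk described above can be sketched in Python: pick a test for each point, note which other points it also validates, and continue until every point is covered. This is a minimal sketch. It assumes each candidate test is described by the set of (axis, element) points it exercises. The greedy tie-break, the function name `identify_tests` and the sample data are hypothetical.

```python
Point = tuple[str, str]  # (axis, element)


def identify_tests(points: list[Point],
                   candidates: dict[str, set[Point]]) -> tuple[list[str], list[Point]]:
    """Walk the outline point by point, choosing tests until every point that
    some candidate exercises is covered. Returns (chosen tests, uncovered points).

    When several candidates exercise the current point, pick the one covering
    the most still-uncovered points (a greedy tie-break; an assumption here).
    """
    uncovered = set(points)
    chosen: list[str] = []
    for point in points:
        if point not in uncovered:
            continue  # already validated by a test chosen earlier
        options = [name for name, covered in candidates.items() if point in covered]
        if not options:
            continue  # a gap: no candidate test exercises this point yet
        best = max(options, key=lambda name: len(candidates[name] & uncovered))
        chosen.append(best)
        uncovered -= candidates[best]
    return chosen, [p for p in points if p in uncovered]


points = [
    ("input data space", "empty input"),
    ("input data space", "maximum-length input"),
    ("hardware environment", "Windows 10"),
    ("hardware environment", "macOS 14"),
]
candidates = {
    "A": {("input data space", "empty input"), ("hardware environment", "Windows 10")},
    "B": {("input data space", "maximum-length input")},
    "C": {("input data space", "empty input")},
}
print(identify_tests(points, candidates))
# (['A', 'B'], [('hardware environment', 'macOS 14')])
```

Test C is never chosen because A already covers everything C would add. The macOS point comes back as a gap that still needs a test to be written.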

Test Case Selection:

Since we accept that we cannot achieve 100% coverage, we must now take a critical look at our list of test cases. We must decide which are the most important, which will exercise the areas of risk and which will find the most bugs. But how?

Look again at the diagram. It represents two of our axes, "input data space" and "hardware environment". There are two tests, "A" and "B", and there are dark spots that denote bugs in the software. Bugs tend to cluster around one or more areas within one or more axes, and these clusters define the areas of risk in the product. Perhaps this section of the code was completed in a hurry, or perhaps this section of the "input data space" was particularly difficult to handle. Whatever the reason, these areas are inherently riskier and more likely to fail than others.

You may also note that test A has not found a bug; it has simply verified the behavior of the program at that point. Test B, on the other hand, has identified a single bug in a cluster of similar bugs. Focusing testing effort around area B will be more productive, because there are more bugs to be found there. Focusing around A will probably not produce any significant results.

When selecting test cases you should try to strike a balance. Your aim should be to provide broad coverage for most of your outline's "axes" and deep coverage of the riskiest areas discovered. Broad coverage means that an element of the outline is evaluated in an elementary way. Deep coverage means a series of repetitive, overlapping test cases that exercise every variation of the element under test. The aim of broad coverage is to identify the risk areas so that deeper coverage can be focused on them to eliminate most of the issues. This is a difficult balancing act between trying to cover everything and focusing your effort on the areas that need the most attention.
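One simple way to act on the balance between broad and deep coverage is to give every element its broad test first. You then spend a separate budget of "deep" test cases in proportion to the defects the broad tests uncovered. The proportional rule and the function name are assumptions for illustration; the text does not prescribe a formula. The area names echo tests A and B in the diagram, and the defect counts are made up.

```python
def allocate_deep_tests(defects_by_area: dict[str, int], deep_budget: int) -> dict[str, int]:
    """Split a budget of extra ("deep") test cases across areas in proportion
    to the defects broad testing found there. Uses largest-remainder rounding
    so the allocations sum exactly to deep_budget. Broad tests are not counted
    here: every area keeps its broad coverage regardless of this result."""
    total = sum(defects_by_area.values())
    if total == 0:
        return {area: 0 for area in defects_by_area}
    shares = {a: deep_budget * d / total for a, d in defects_by_area.items()}
    alloc = {a: int(s) for a, s in shares.items()}
    leftover = deep_budget - sum(alloc.values())
    # Hand the remaining cases to the areas with the largest fractional parts.
    by_remainder = sorted(shares, key=lambda a: shares[a] - alloc[a], reverse=True)
    for a in by_remainder[:leftover]:
        alloc[a] += 1
    return alloc


print(allocate_deep_tests({"area A": 0, "area B": 6, "area C": 2}, 20))
# {'area A': 0, 'area B': 15, 'area C': 5}
```

Area A, where broad testing found nothing, gets no deep tests but is still covered by its broad test. Most of the deep budget goes to the cluster around B.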

Test Estimation:

Once you have prioritized the test cases, you can estimate how long each case will take to execute. Take each test case and make a rough estimate of how long you think it will take to set up the appropriate input conditions, run the software and check the output. No two test cases are alike, but you can approach this step by assigning an average execution time to each case and multiplying by the number of cases. Total the hours and you have an estimate of the testing effort for that piece of software.

You can then negotiate with the project manager or product manager for the appropriate budget to run the tests. The final figure you arrive at will depend on the answers to a number of questions, including: How deep are your organization's pockets? How mission-critical is the system? How important is quality to the company? How reliable is the development process? The number will almost certainly be lower than you hoped for.

In general, more iterations are better than more tests. Why? Developers often do not fix a bug on the first attempt. Bugs tend to cluster, and finding one may uncover more. If you have a lot of bugs to fix, you will need multiple iterations to retest the fixes. And finally, and honestly, in a mature software development effort many tests will uncover no bugs at all!
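The estimation arithmetic is simple enough to write down directly. This is a minimal sketch. It assumes all times are in minutes and that every case is rerun in each iteration. The function names and figures are hypothetical.

```python
def estimate_hours(case_minutes: list[float], iterations: int) -> float:
    """Sum the per-case estimates (set up inputs, run, check output) and
    multiply by the number of test iterations (retest cycles)."""
    return sum(case_minutes) * iterations / 60


def estimate_hours_by_average(num_cases: int, avg_minutes: float, iterations: int) -> float:
    """Shortcut from the text: average time per case times number of cases."""
    return num_cases * avg_minutes * iterations / 60


def cases_affordable(budget_hours: float, avg_minutes: float, iterations: int) -> int:
    """How many distinct cases fit a fixed budget if every case runs each iteration."""
    return int(budget_hours * 60 // (avg_minutes * iterations))


# Hypothetical numbers: 120 cases, 30 minutes each, 3 iterations.
print(estimate_hours_by_average(120, 30, 3))  # 180.0
print(cases_affordable(180, 30, 4))           # 90
```

On the same 180-hour budget, adding a fourth iteration leaves room for 90 distinct cases instead of 120. The advice above is that this trade of tests for iterations is generally worth making.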

Refining the plan:

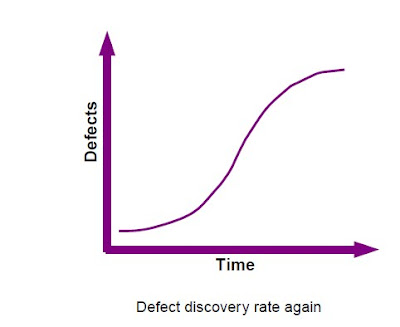

As you progress through each cycle of testing, you can refine your test plan. Typically, not many defects are found during the early stages of testing. Then, as testing hits its stride, defects start coming faster and faster until the development team gets on top of the problem and the curve starts to flatten. As development moves on and testing shifts to retesting fixed defects, the number of new defects decreases. This is where the risk/reward ratio begins to bottom out, and you may have reached the limits of effectiveness of this particular form of testing. If you have more tests planned or more time available, now is the time to shift the focus of testing to a different point in your outline strategy.

Cem Kaner said it best: "The best test cases are the ones that find bugs." A test case that finds no bugs is not necessarily worthless, but it is obviously worth less than a test case that does find problems. If your testing is not finding any bugs, then perhaps you should be looking elsewhere. Conversely, if your testing is finding a lot of issues, you should pay more attention to that area, but not to the exclusion of everything else; there is no point in fixing just one area of the software! Your cycle of refinement should be geared towards discarding pointless or ineffective tests and diverting attention to more fertile areas for evaluation.

Also, referring back to your original outline each time will help you avoid losing sight of the wood for the trees. While finding issues is important, you can never be sure where you will find them, so you cannot assume that the problems you are encountering are the only ones there. You must keep a continuous level of broad coverage testing active to give you an overview of the software while you focus deep coverage testing on the trouble spots.
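The point where "the curve starts to flatten" can be flagged mechanically from the number of new defects found in each test cycle. This is a minimal sketch. The 25%-of-peak threshold, the function name and the sample counts are hypothetical choices for illustration and are not taken from the text.

```python
def flattening_cycle(new_defects_per_cycle: list[int], fraction: float = 0.25):
    """Index (0-based) of the first cycle after the peak whose new-defect count
    drops to `fraction` of the peak or below; None if it hasn't flattened yet."""
    if not new_defects_per_cycle:
        return None
    peak = max(new_defects_per_cycle)
    peak_index = new_defects_per_cycle.index(peak)
    for i in range(peak_index + 1, len(new_defects_per_cycle)):
        if new_defects_per_cycle[i] <= fraction * peak:
            return i  # time to shift focus to another point in the outline
    return None


# Slow start, a rise as testing hits its stride, then a tail-off.
print(flattening_cycle([3, 8, 21, 34, 26, 15, 7, 4]))  # 6
```

Reaching the threshold is a prompt to move effort elsewhere in the outline, not a signal to stop testing altogether.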

Summary:

1. Decompose the software into a number of "axes" that represent different aspects of the system under test

2. Further decompose each axis of the software into sub-units or "elements"

3. For each element in each axis, decide how you are going to test it

4. Prioritize the tests based on the best knowledge available

5. Estimate the effort required for each test and draw a line through your list of tests where you think appropriate (based on your schedule and budget)

6. When you run your test cases, refine your plan based on the results. Focus on the areas that show the most defects, while maintaining broad coverage of other areas. Your intuition may be your best friend here! Risky code can often be identified through "symptoms" such as unnecessary complexity, a history of defects, stress under load and code reuse. Use whatever historical data you have, the opinions of experts and end users, and common sense. If people are nervous about a particular piece of code, you should be too. If people are being evasive about a particular function, test it twice. If people dismiss what you think is a valid concern, pursue it until it is clear. And finally, ask the developers. They often know exactly where the bugs are hidden in their code.
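Steps 4 and 5 of the summary can be sketched as a "cut line" over a prioritized list. This is a minimal sketch. It assumes each test already has a numeric priority (higher runs first) and an hour estimate. The tuple layout and the test names T1 to T4 are hypothetical. Following the text literally, the line is drawn once: the first test that does not fit the budget, and everything ranked below it, falls below the line. This holds even if a smaller, lower-priority test would still fit.

```python
def cut_line(tests: list[tuple[str, int, float]], budget_hours: float):
    """tests: (name, priority, estimated hours); higher priority runs first.
    Returns (names above the line, names below the line)."""
    ranked = sorted(tests, key=lambda t: t[1], reverse=True)
    above: list[str] = []
    below: list[str] = []
    used = 0.0
    for name, _priority, hours in ranked:
        # Once one test misses the budget, the line is drawn: all later ones are cut.
        if not below and used + hours <= budget_hours:
            above.append(name)
            used += hours
        else:
            below.append(name)
    return above, below


tests = [("T1", 5, 10), ("T2", 9, 20), ("T3", 7, 15), ("T4", 2, 5)]
print(cut_line(tests, budget_hours=40))  # (['T2', 'T3'], ['T1', 'T4'])
```

Tests below the line are not discarded. They are the first candidates if the budget grows or if refinement after a test cycle moves the focus to their area.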
